Computer Science

Computing History Displays: Fifth Floor - Magnetic Data Storage - Magnetic Disk Storage

The history of magnetic disk storage for digital data has a very precise beginning. The first such storage resulted from an undertaking by IBM, at its new (1952) laboratories in San Jose, California, to develop both a disk store, the IBM 350, and a computer system based around the use of on-line storage, the RAMAC (Random Access Method of Accounting and Control), which was introduced in 1956. For the first 20 years of their development, disks were very much an IBM story. Although other important players appeared later, IBM remained a leader in pushing disk technology until it sold its disk business to Hitachi in 2003, where it became Hitachi Global Storage Technologies.

The final IBM 350 disk storage had 50 double-sided disk platters, each 24 inches in diameter, on one spindle rotating at 1200 rpm. There was one dual-head mechanism that was inserted between disks to read the disk above or the one below - the head had to be retracted and moved vertically to the appropriate gap whenever there was a change in the surface being read. One problem with large rotating disks is that they wobble (called run-out). To get the head consistently near enough to the surface it was necessary to force it closer using air under pressure pumped to the head. The head was stopped from contacting the disk surface by a jet of air from the head forming an "air bearing" that caused the head to float like a hovercraft at 800 microinches (20 micrometres). This allowed the IBM 350 to store 100 bits per inch with tracks spaced at 20 per inch. Assembled with covers, the 350 was 60 inches long, 68 inches high and 29 inches deep. It was configured with 50 magnetic disks containing 50,000 sectors, each of which held 100 alphanumeric characters, for a total capacity of 5 million characters.

Dividing its capacity by its volume we can see that the first disk stored data at about the same density as the early drums, although of much greater capacity. Compared to a drum memory, which essentially has a 2 dimensional surface for storage, a disk device makes better use of space by stacking disks in 3 dimensions. Consequently disk storage was subject to more-intense development and improvement than were drums, leading to disks quickly replacing drums in their applications.
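The division mentioned above can be worked through using only the figures quoted for the IBM 350 (50,000 sectors of 100 characters, and a cabinet of 60 x 68 x 29 inches). The following is a minimal sketch of that arithmetic; the function names are illustrative, and the result describes the whole cabinet rather than the platters alone.

```python
# Figures for the IBM 350 as quoted in the text above.
LENGTH_IN, HEIGHT_IN, DEPTH_IN = 60, 68, 29
SECTORS = 50_000
CHARS_PER_SECTOR = 100


def ibm350_capacity_chars() -> int:
    """Total capacity in characters: sectors times characters per sector."""
    return SECTORS * CHARS_PER_SECTOR


def ibm350_volume_cubic_inches() -> int:
    """Volume of the assembled cabinet in cubic inches."""
    return LENGTH_IN * HEIGHT_IN * DEPTH_IN


def chars_per_cubic_inch() -> float:
    """Storage density of the whole unit: capacity divided by volume."""
    return ibm350_capacity_chars() / ibm350_volume_cubic_inches()


print(ibm350_capacity_chars())           # 5000000
print(ibm350_volume_cubic_inches())      # 118320
print(round(chars_per_cubic_inch(), 1))  # 42.3
```

So the first disk stored roughly 42 characters per cubic inch of cabinet.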

The modern disk remains a mechanical device and is recognizably a descendant of the 1956 IBM 350. The improvement over 50 years has been obtained by continually refining all aspects of the technology. There have been too many individual improvements to mention them all, but we will try to cover the main steps.

Much of the early development of disks was from IBM or by ex-IBM employees around Silicon Valley in California. The IBM 350, although introducing disks and air bearings, was very much a "first attempt." The next IBM store, the IBM 1301, has more in common with the modern disk. Although it had 25 disk platters per spindle, the 1301 gave up on moving the head between surfaces and had a "comb" of heads, one for each surface, which were moved in unison, with only one of the 50 being active. (The IBM 1301 had two 28 MB modules, each with 25 disks and 50 sliders. It rotated at 1800 rpm with seeks taking 165 ms on average. There were 50 tracks per inch with 520 bits per inch stored along each track - 13 times the IBM 350 density.) All disks since have had comb heads (even if with only one tooth!) - sometimes with more than one head per surface.
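The "13 times" figure follows directly from the numbers quoted for the two machines, taking areal density as bits per inch along the track multiplied by tracks per inch across the surface. A minimal sketch of the arithmetic (the function name is illustrative):

```python
def areal_density(bits_per_inch: int, tracks_per_inch: int) -> int:
    """Bits per square inch: linear density along a track times track density."""
    return bits_per_inch * tracks_per_inch


ibm350 = areal_density(bits_per_inch=100, tracks_per_inch=20)
ibm1301 = areal_density(bits_per_inch=520, tracks_per_inch=50)

print(ibm350)            # 2000
print(ibm1301)           # 26000
print(ibm1301 / ibm350)  # 13.0
```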

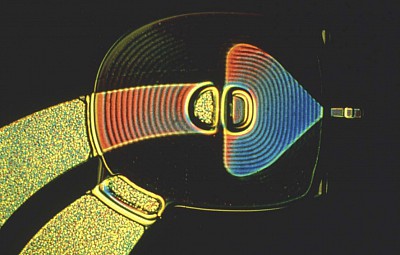

The other significant change was the "self acting slider" - the heads did not have to be supplied with compressed air for the air bearing but this was formed by the hydrodynamic profile of the head. The heads were forced down on the disk surface mechanically and to avoid damage the heads had to be parked off the surface at "power-on" until the disk picked up sufficient speed for the relative motion of the head to allow the head to "float" above the disk surface. Such "self acting hydrodynamic sliders" have been used ever since. They allow the heads to be much closer to the disk surface and thus allow closer spacing of the bits in the track. Since the IBM 1301, there has been a steady improvement in the design of sliders, involving making them smaller and smaller, and a corresponding need for the disk surface to be made smoother and flatter to allow the head on the slider to get closer and closer to the surface - down to less than 5 microinches today.

The problem with disk stores such as the IBM 1301 was that they were of relatively low capacity yet extremely expensive. Their use in any but the largest computers would require lower expense yet that could only be achieved by reducing the capacity. A solution to this conundrum was to make the set of platters removable and interchangeable. The first IBM "disk pack" introduced as the IBM 1311 in 1963 had a capacity of just 2 MB, each pack with 6 double-sided platters. The follow-on version for the System/360 increased the capacity to 7.25 MB. The disk pack and its interfaces were published standards so there were many vendors of disk packs and even disk drives. It also set the standard disk diameter of 14 inches that was to last for 20 years.

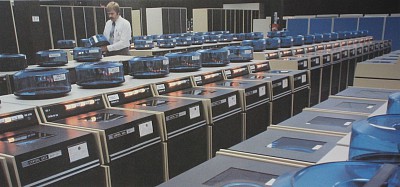

The disk pack was adopted by other vendors and became a widespread approach to storage, especially with smaller computer systems. Disk packs were made in a variety of sizes and formats. At one extreme were the simplest packs which had only one platter and tended to be called "cartridges." At the other extreme, IBM introduced a multiple drive storage (the IBM 2314) that used 8 standard sized packs to come up with a total disk storage in one unit of 233MB. This finally began to provide at reasonable cost an on-line storage capacity that would satisfy the needs of on-line applications. In the early 1970s computer rooms came to be dominated by "farms" of disk pack drives with drives and disk packs made by many different companies. Although very successful, disk packs were not without their problems as may be seen in this booklet from Control Data Corporation - The Disk Pack Garden of Verse.

There is a serious problem involved in moving the heads to a particular track - this applies to all disks, though to disk packs in particular. How is the track identified? Up until the mid-sixties heads tended to be moved mechanically or hydraulically, and the location of the track was determined by a mechanical detent or stop. As tracks got closer together the variation in geometry from pack to pack made it difficult to reduce the spacing between tracks while still being able to locate tracks precisely.

The modern solution to this problem was introduced in the IBM 3330 for use with the IBM System/370 in 1970. With the IBM 3330 the head assembly is movable to arbitrary positions by means of a current-carrying coil inside a fixed magnet (called a "voice coil" because the same effect is used in loudspeakers, where a coil attached to the cone moves in the field of a fixed magnet). One of the disk surfaces is initialized with special timing signals that can be sensed by the control hardware and used to find each track and deliver a servo signal that can be used to keep the head on track. Each block begins with a permanently recorded address so that the control hardware can distinguish the tracks and blocks from each other.

The disk head arm can be set moving by initiating an electric current in the voice coil - the arm moves inwards or outwards, depending on the current direction. If the current is steady the arm will accelerate but will soon settle at a constant speed because of opposing currents induced by the movement of the coil. With the current stopped the coil will stop moving. To move from track to track the disk controller has to apply the current for the appropriate time to make the head skip the required number of tracks, at the same time sensing tracks as they pass. Once a track is located the head assembly isn't fixed in position (although the coil resists movement because of the induced currents) but has to be continually "jigged" with small changes of current to keep it on the correct track. This was all pretty impressive considering that control logic at the time was expensive - this was before the invention of the microprocessor.
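The behaviour described above (acceleration under a steady current, settling to a constant speed, and stopping once the current is removed) can be illustrated with a very simple model in which the opposing effect of the induced currents is treated as a drag proportional to speed. This is only a sketch of the paragraph's description, not of any real drive: the function name and every parameter value (force per amp, damping, mass, time step) are hypothetical, chosen just to make the shape of the motion visible.

```python
def simulate_arm(current_profile, dt=1e-4, force_per_amp=10.0,
                 damping=2.0, mass=0.05):
    """Euler integration of m*dv/dt = force_per_amp*i - damping*v.

    All parameter values are hypothetical. Returns a list of
    (position, velocity) pairs, one per time step.
    """
    position = 0.0
    velocity = 0.0
    history = []
    for current in current_profile:
        acceleration = (force_per_amp * current - damping * velocity) / mass
        velocity += acceleration * dt
        position += velocity * dt
        history.append((position, velocity))
    return history


# Steady current of 1 A for 2000 steps, then no current for 2000 steps.
profile = [1.0] * 2000 + [0.0] * 2000
history = simulate_arm(profile)

speed_while_driven = history[1999][1]   # close to force_per_amp / damping
speed_after_cutoff = history[-1][1]     # close to zero
print(round(speed_while_driven, 2), round(speed_after_cutoff, 2))
```

In this model the constant speed reached is simply the drive force divided by the damping coefficient, which is why the controller can cover a required number of tracks by choosing how long to apply the current.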

The IBM 3330 became another industry standard, with a capacity of 100 MB per pack (later 200 MB), now 389 times better than the IBM 350. The voice coil technique is universal in modern disks, though most do not have special tracks for the servo signals but instead have small bursts of servo signal recorded between the blocks of data.

Removable disk packs dominated disk storage in the late 1960s. The high capacity of the multiple-disk devices allowed the disk packs holding important databases to remain permanently on their drives. The amount of stored data continuously increases, so there is always demand for disks of larger capacity. However, the required flying height of the head above the disk surface is such that removable disk packs are just not clean enough (free of dust, smoke etc.) to allow technological progress. Additionally, variations in dimensions and properties from pack to pack restrict track densities. Solving these problems required that the disk platters and heads be sealed together as an enclosed, permanently clean unit.

The first IBM disk to be sealed gave its internal project name to this class of disk for the future. IBM had a project for a lower-end drive that comprised two 30MB drives - "30-30" is also the designation of a famous Winchester rifle, so the IBM 3340 was called the Winchester. As well as being sealed and having low-mass, lightly-loaded heads, the Winchester disks had lubricated surfaces on which the heads rested when the disk was not in use - previously the heads had to be lifted and parked off the disk to avoid damage at start-up when the disk speed was not great enough for the heads to float, and this was not compatible with a lower flying height. (Interestingly, in modern disks the heads are so close to the surface that they are inclined to stick - this has required that heads are again parked when the disk is not rotating.)

The IBM 3340 had one strange feature: the disks were removable, but the disk cartridge included the heads within the module. The next high-end disk from IBM, the IBM 3350, gave up on removability, which was never again used with advanced disks. The IBM 3350 offered 317MB per spindle - 1538 times more dense than the IBM 350 - and became the design point for the first half of the 70s.

From the early 1960s most disks had platters 14 inches in diameter. This became a standard size for the high-end disks for over twenty years. The high point for the 14 in. disk came with the IBM 3380 (1981), with 9 platters and the breaking of the 1 GByte barrier with a capacity of 1260 MBytes. This device was also housed in the tallest, largest cabinet ever used for a disk - truly the pinnacle of large disk development. The IBM 3380 continued in different versions until 1987 with the 3380K drive of 3781 MB capacity.

High-end disks needed a large diameter to gain the capacity needed for on-line databases. For less demanding markets the head technologies developed for the larger disks made smaller disks viable - these started to be introduced around 1980. IBM produced the IBM 3310 disk storage with 8-inch drives in 1979. The next year Seagate Technology introduced a 5.25 in. drive - the ST-506 - specifically intended for personal computers.

Making devices smaller was the beginning of a virtuous cycle of feedback. Large-diameter platters consume more power - reportedly in proportion to the rotation speed (rpm) raised to the power 2.8 times the diameter raised to the power 4.6! Smaller platters also have better rigidity and vibration properties, which makes it possible to achieve greater densities than with wider platters. The result is that smaller disks are much more competitive in price per bit than large drives, so that multiple small drives become a better way of providing capacity. For example, IBM replaced its 3380 with the 10.8 in. 3390, which had a slightly larger capacity but double the areal density, at 62.6 Mbit per square inch.
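To get a feel for what such a scaling rule implies, the sketch below compares platters using the exponents quoted above (2.8 for rotation speed, 4.6 for diameter). The rule is taken on trust from the text, and since no constant of proportionality is given only ratios between two configurations are meaningful; the function name is illustrative.

```python
RPM_EXPONENT = 2.8       # exponent quoted in the text
DIAMETER_EXPONENT = 4.6  # exponent quoted in the text


def relative_power(rpm: float, diameter_in: float) -> float:
    """Power in arbitrary units; only ratios of two results mean anything."""
    return rpm ** RPM_EXPONENT * diameter_in ** DIAMETER_EXPONENT


# Same rotation speed, 14 in. platter versus 3.5 in. platter.
ratio_same_speed = relative_power(3600, 14.0) / relative_power(3600, 3.5)
print(round(ratio_same_speed))  # 588

# A small platter spun twice as fast still needs far less power.
ratio_fast_small = relative_power(3600, 14.0) / relative_power(7200, 3.5)
print(round(ratio_fast_small))
```

Under this rule a 14 in. platter needs several hundred times the power of a 3.5 in. platter at the same speed, which leaves a great deal of room to spin the small platter faster.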

This happy circumstance led to a succession of new disks, gradually shrinking to smaller sizes physically as the capacity grew. At present the highest capacity disks are 3.5 inches in diameter, but new markets have been opened up with hard disks for personal computers, for notebooks, and now for personal entertainment devices - the smallest disks now occupy less than 0.5 cubic inches of space with diameter less than one inch.

An interesting example of the tradeoff between single large and multiple small disks was the introduction in the late 1980s of the RAID concept. The idea behind the Redundant Array of Inexpensive Disks was that cheaper disks were available but were not as reliable. However, multiple cheaper disks could be made reliable and competitive by managing a set of disks with one controller and spreading information across the disks in a redundant way so that errors could be corrected - in the extreme by making multiple copies of each file, but with many clever, less costly variations. In fact small disks are not less reliable than larger disks, and any system of integrity must have redundant backup for "grand disasters" in any case. However, the standard disk setup for large servers is now an array of small disks, multiple thousands of them, with total capacities in the PetaByte region.
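One of the less costly variations is to store a single parity block alongside a group of data blocks, each on a different disk, so that any one lost block can be rebuilt from the others. The sketch below illustrates that idea with exclusive-or parity; it is not a description of any particular RAID product, the function names are illustrative, and the three short byte strings stand in for blocks held on three separate disks.

```python
from functools import reduce


def xor_blocks(blocks):
    """Byte-wise XOR of a list of equal-length blocks."""
    length = len(blocks[0])
    if any(len(block) != length for block in blocks):
        raise ValueError("all blocks must have the same length")
    return bytes(reduce(lambda x, y: x ^ y, column) for column in zip(*blocks))


def make_parity(data_blocks):
    """Parity block to be stored on an extra disk."""
    return xor_blocks(data_blocks)


def rebuild_missing(surviving_blocks, parity):
    """Recover the one missing data block from the survivors and the parity."""
    return xor_blocks(list(surviving_blocks) + [parity])


data = [b"disk", b"pack", b"1956"]  # one block per (hypothetical) disk
parity = make_parity(data)

# Suppose the disk holding the second block fails.
rebuilt = rebuild_missing([data[0], data[2]], parity)
print(rebuilt)  # b'pack'
```

The cost is one extra disk per group rather than a full second copy of everything, at the price of having to read all the surviving disks to rebuild a failed one.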

In order to gain improvements in density it is necessary to make the magnetized bits smaller and pack them together much more closely. This has required the miniaturization of the head and its windings, and improvement in the flatness of the platters. IBM introduced "thin-film" heads in its IBM 3370 disk - the heads and their windings are "printed" using techniques developed for high-density circuit boards. The materials used in platters have been made thinner and more rigid, in some cases being made of ceramic (smaller diameter platters experience much less centrifugal force). Platter coatings were once applied as a "paint" that was spread by rotation, but these are now replaced by "thin films" applied by plasma sputtering in a vacuum - a technique developed for the manufacture of integrated circuits. The material science behind platter coatings is now extremely sophisticated.

As the size of bits decreases there is a problem with sensitivity of reading. The inductive signal depends on the strength of magnetization and the speed of rotation. Although small disks are made to rotate faster, the strength of the signal limits progress. To overcome this, other physical phenomena have been drawn upon for sensing the magnetization. The most widely used now are magneto-resistive sensors, which detect magnetization by a change in the resistance of the circuit. More recently a quantum effect called Giant Magnetoresistance or "spin valve" has been drawn upon, and other phenomena are being considered. (See Scientific American, July 2004.)

The first disks, even ones as advanced as the IBM 3350, were from an era where electronic logic to control disks was very expensive - this led to the goal of disks being simple with reliance on a shared controller or the CPU itself for sophisticated control. All the details of control were centralized, other than the transfer of the data characters themselves.

With servo-tracking heads it is necessary for the disk device to recognize the address at the start of each sector - this is just a simple matching of the bit pattern of the desired sector number with the number being read. With centrally controlled disks from IBM the computer would be informed of a match and would be required to command the disk, in real time, to read or write the following sector. This was a severe limitation on the speed of operation of the control mechanism.

However, the opportunity presented itself of locating information by matching a data field other than the address. IBM introduced the count/key data (CKD) format that was used for many years - once a track was located, the record could be selected by matching the Key rather than the Count (address). This led to an important approach to data layout called the "indexed sequential access method." There were also proposals to take this idea further and there was at least one product - the CAFS or Content Addressable File Store from ICL - where the disk store was referenced entirely by key rather than address.

Apart from this deviation (which was of limited use because of the possible smarter ways of organizing data other than in simple lists) the trend has been to put more and more processing power close to the disk but keep the disk storage format as simple as possible. The processor now asks the disk to read or store data at a disk address - the rest is up to the disk itself. Nowadays data is stored in fixed-sized blocks preceded by an identifying address. At one time disks were divided into cylinders and sectors containing equal numbers of bits - the inner tracks stored data more densely than the outer tracks. Now data is stored in the same density on all tracks with more blocks in the outer tracks - this can give a 50% capacity boost with small diameter disks.
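The 50% figure can be reproduced with a simple geometric model. Assume (these assumptions are not from the source) that tracks are evenly spaced between an inner and an outer radius, and that the innermost track is recorded at the maximum density the head can manage. If every track holds the same number of bits, capacity is proportional to the inner circumference times the number of tracks; if every track is recorded at the same density, capacity is proportional to the area of the recorded band. The function name and the radii used are illustrative.

```python
def zoned_capacity_gain(inner_radius: float, outer_radius: float) -> float:
    """Ratio of constant-density capacity to equal-bits-per-track capacity.

    Common factors (2*pi, linear density, track pitch) cancel, leaving
    (outer + inner) / (2 * inner) in the idealised continuous case.
    """
    if not 0 < inner_radius < outer_radius:
        raise ValueError("need 0 < inner_radius < outer_radius")
    fixed = inner_radius * (outer_radius - inner_radius)
    zoned = (outer_radius ** 2 - inner_radius ** 2) / 2
    return zoned / fixed


# Outer recorded radius twice the inner one (hypothetical geometry).
print(zoned_capacity_gain(1.0, 2.0))  # 1.5
```

With the outer radius twice the inner radius the gain is exactly one half. A real disk groups tracks into a limited number of zones, so the gain achieved in practice would be somewhat less than this idealised figure.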

Increased processing power, provided by special circuitry and micro-processors, has made a huge improvement to disk controllers. It is very difficult to pack bits closely together without increasing the rate of read errors to unacceptable levels. A major development was the introduction of error-correcting codes that can handle bursts of errors, corresponding to small faults on disk surfaces - "Reed-Solomon" codes and others. Another development is the use of signal processing and logic to match the read pattern for a group of bits and so determine, with maximum likelihood, just what the bits are (a technique known as PRML, for partial response maximum likelihood).

As well as logic, cheaper and larger random access memory has improved disks. Nowadays disks commonly contain a buffer or cache memory so that recently accessed items, or adjacent items, are obtained from the high speed memory rather than from the disk surface. The disk controller can separate transmission from reading, allowing the speed of transfer to be divorced from the speed of rotation of the disk.
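The effect of such a buffer can be sketched in a few lines. The class below is a toy model, not the firmware of any real drive: it keeps the most recently used blocks in memory, and on a miss it also reads the following block on the assumption that adjacent items will be wanted next. The class name, the `read_block_from_surface` callback and the parameter values are all hypothetical.

```python
from collections import OrderedDict


class BufferedDisk:
    """Toy disk buffer: least-recently-used cache with simple read-ahead."""

    def __init__(self, read_block_from_surface, cache_blocks=4, read_ahead=1):
        self._read_surface = read_block_from_surface
        self._capacity = cache_blocks
        self._read_ahead = read_ahead
        self._cache = OrderedDict()
        self.hits = 0
        self.misses = 0

    def _store(self, block_number, data):
        self._cache[block_number] = data
        self._cache.move_to_end(block_number)
        while len(self._cache) > self._capacity:
            self._cache.popitem(last=False)  # discard least recently used

    def read(self, block_number):
        if block_number in self._cache:
            self.hits += 1
            self._cache.move_to_end(block_number)
            return self._cache[block_number]
        self.misses += 1
        data = self._read_surface(block_number)
        self._store(block_number, data)
        # Read ahead: fetch the adjacent blocks while the head is there.
        for n in range(block_number + 1, block_number + 1 + self._read_ahead):
            if n not in self._cache:
                self._store(n, self._read_surface(n))
        return data


surface_reads = []


def fake_surface(block_number):
    """Stand-in for the slow mechanical read; records which blocks it serves."""
    surface_reads.append(block_number)
    return f"block-{block_number}".encode()


disk = BufferedDisk(fake_surface)
disk.read(10)  # miss: blocks 10 and 11 come off the surface
disk.read(11)  # hit: served from the buffer
disk.read(10)  # hit
print(surface_reads, disk.hits, disk.misses)  # [10, 11] 2 1
```

Three requests are satisfied with a single visit to the disk surface, which is the saving the buffer is there to provide.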

Modern small disks are clearly descendants of the IBM 3350 in many of their visible features, but there are a couple of obvious visible differences.

The early disk arm assemblies moved in and out using a linear motion. At some stage there was a change to placing the arm assembly on a pivot and rotating the arm, causing the heads to move from track to track. This requires less mass to be moved and fits nicely in the corner of a rectangular package.

Early disk drives used an external motor to rotate the disk, usually with a belt connection. As disks became smaller this motor was replaced by one on the same axle as the disk itself, the motor being integrated into the package, directly rotating at the same speed as the platters. This direct connection and the smaller diameter of platters has led to an increase in rotation speed to 10,000 rpm and beyond in recent years, after having remained unchanged for a long time. The speed of rotation is adjusted dynamically under microprocessor control. A recent change has been the replacement of ball bearings with smoother oil-lubricated bearings that reduce noise and run-out.
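Rotation speed matters because, once the head is on the right track, the drive still has to wait for the wanted sector to come round. On the usual assumption that the sector is equally likely to be anywhere on the track, the average wait is half a revolution. The sketch below applies this to speeds mentioned in this display (1200 rpm for the IBM 350, 1800 rpm for the IBM 1301 and 10,000 rpm for recent drives); the function names are illustrative.

```python
def revolution_time_ms(rpm: float) -> float:
    """Time for one full revolution, in milliseconds."""
    return 60_000 / rpm


def average_rotational_latency_ms(rpm: float) -> float:
    """Average wait for a sector: half a revolution."""
    return revolution_time_ms(rpm) / 2


for rpm in (1200, 1800, 10_000):
    print(rpm, round(average_rotational_latency_ms(rpm), 1))
# 1200 25.0
# 1800 16.7
# 10000 3.0
```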

At the time of writing for this display the largest disks in the 3.5 in. format had capacity of about 500 GBytes. Forecasters were predicting that 1 TByte disks are not far away and, indeed, at the time of "on-lining" in 2009 disks of 2TByte were available (4Tbyte at time of last update in 2011). But the question remains as to how far disk density improvements can be continued.

One expensive step in manufacturing current disks is writing the servo information. This is done before disk assembly because servo information is written in a different way, offset, to ordinary bits. (It is actually very hard to find out exactly how disks work and are manufactured because many aspects are competitive "trade-secrets.") One possible improvement is to include the ability to write the servo information in each disk - this will allow closer spacing of bits which is the aim of the game. Other proposals are to burn servo info using lasers and sense that information optically.

Currently bits are stored with a magnetic bar that is about 12 times longer than wide. Reducing the relative width offers another step to make bits smaller and pack them closer together. Another proposal is to heat the recording surface with a laser to make it easier to change the magnetization.

A long heralded change has been a move to vertical recording - with a very thin film of magnetic material, this offers the possibility of reduction in bit size. It was investigated by IBM in the late 1950s but finally made its appearance in real products in 2005, and is now ubiquitous.

Everybody seems to agree that disk improvements will continue into the low TByte range, but beyond that? One problem is that as the size of bits decreases, random thermal fluctuations in magnetization (the superparamagnetic effect) come into play and increase error rates. All the same, many are predicting 100 TBytes on your home computer, though some are questioning the implications of this amount of storage. What will it be used for? How will it be backed up?

Note that recently the target market for hard disks has changed from computers of all sizes and become focused on the personal entertainment industry. The need for digital music storage has led to the development of tiny hard disks, and even tinier ones for use in cameras. At the high end the big application for hard disks is becoming storage of hundreds of hours of video in "set top boxes" and, eventually, on-line storage of the personal video library! Flash memories are making inroads into the market for hard disks, but as long as hard disks have capacity and cost on their side their development will continue apace.